Managing multiple puppet modules with modulesync

With the exception of children, puppies and medical compliance frameworks, managing one of something is normally much easier than managing a lot of them. If you have a lot of puppet modules, and you’ll eventually always have a lot of puppet modules, you’ll get bitten by this and find yourself spending as much time managing the supporting functionality as the puppet code itself.

Luckily you’re not the first person to have a horde of puppet modules that share a lot of common scaffolding. The fine people at Vox Pupuli had the same issue and maintain an excellent tool, modulesync, that solves this very problem. With modulesync and a little YAML you’ll soon have a consistent, easy to iterate on, set of modules.

To get started with modulesync you need three things, well four if you count the puppet module horde you want to manage.

- a file of repo names you want to manage

- a file of metadata containing how to issue the changes

- a directory of files to keep in sync

I’ve been using modulesync for some of my projects for a while but we recently adopted it for the GDS Operations Puppet Modules so there’s now a full, but nascent, example we can look at. You can find all the modulesync code in our public repo.

First we set up the basic modulesync config in modulesync.yml:

---
git_base: 'git@github.com:'
namespace: gds-operations
branch: modulesync
...
# vim: syntax=yaml

This YAML mostly controls how we interact with our upstream. git_base is the base of the URL to run git operations against. In our case we explicitly specify GitHub (which is also the default) but this is easy to change if you use Bitbucket, GitLab or a local server. We treat namespace as the GitHub organisation the modules are under. As we never push directly to master we specify a branch our changes should be pushed to for later processing as a pull request.
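To make the relationship between these settings a little more concrete, here is a minimal Ruby sketch of how git_base, namespace and a module name could be joined into a remote to clone. The remote_url helper is hypothetical and exists only for illustration. It is not modulesync's own code, and the trailing .git is an assumption on my part.

```ruby
require 'yaml'

# Settings copied from the modulesync.yml shown above.
settings = YAML.safe_load(<<~YAML)
  ---
  git_base: 'git@github.com:'
  namespace: gds-operations
  branch: modulesync
YAML

# Hypothetical helper (not part of modulesync): join the base, the
# namespace and the module name into a git remote. The ".git" suffix
# is an assumption for this sketch.
def remote_url(settings, module_name)
  "#{settings['git_base']}#{settings['namespace']}/#{module_name}.git"
end

puts remote_url(settings, 'puppet-aptly')
```

Swapping git_base for something like an HTTPS prefix or a local server address would change every remote in one place, which is the appeal of keeping it in a single config file.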

The second config file, managed_modules.yml, contains a list of all the modules we want to manage:

---
- puppet-aptly
- puppet-auditd
- puppet-goenv

By default modulesync will perform any operations against every module in this file. It’s possible to filter this down to specific modules but there’s only really value in doing that as a simple test. After all, keeping the modules in sync is pretty core to the tool’s purpose.
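As a rough illustration of the "everything by default, a subset when filtered" behaviour, the Ruby sketch below loads the same list and narrows it with a pattern. The select_modules helper is hypothetical, and treating the filter as a regular expression reflects my understanding of how msync's filter option behaves rather than anything taken from its source.

```ruby
require 'yaml'

# The module list from managed_modules.yml above.
managed = YAML.safe_load(<<~YAML)
  ---
  - puppet-aptly
  - puppet-auditd
  - puppet-goenv
YAML

# Hypothetical helper (not part of modulesync): return every module when
# no filter is given, otherwise only the names matching the pattern.
def select_modules(names, filter = nil)
  return names if filter.nil?

  names.grep(Regexp.new(filter))
end

puts select_modules(managed).length
puts select_modules(managed, 'aptly')
```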

The last thing to configure is a little more abstract. Any files you want to manage across the modules should be placed in the moduleroot directory and given a .erb extension. At the moment we’re treating all the files in this directory as basic, static files, but modulesync does expand them as templates and provides a @configs hash, which contains any values you specify in the base config_defaults.yml file. These values can also be overridden with more specific values stored alongside the module itself in the remote repository.
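If you’ve not used ERB before, this small Ruby sketch shows the general idea of a template reading values from a @configs hash. Everything here is illustrative: the TemplateContext class, the year and holder keys, and the LICENSE wording are all invented for the example, and keying the defaults by file name is my understanding of the config_defaults.yml layout rather than something shown above.

```ruby
require 'erb'
require 'yaml'

# Hypothetical config_defaults.yml contents; the keys are invented here.
defaults = YAML.safe_load(<<~YAML)
  ---
  LICENSE:
    year: 2015
    holder: Example Organisation
YAML

# Hypothetical contents for a moduleroot/LICENSE.erb file.
template = "Copyright (c) <%= @configs['year'] %> <%= @configs['holder'] %>\n"

# Stand-in for the rendering step (not modulesync's own class): it exposes
# a @configs instance variable to the template through its binding.
class TemplateContext
  def initialize(configs)
    @configs = configs
  end

  def render(source)
    ERB.new(source).result(binding)
  end
end

rendered = TemplateContext.new(defaults['LICENSE']).render(template)
puts rendered
```

A file with no ERB tags in it passes through unchanged, which is why static files work fine with the .erb extension.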

Once you’ve created the config files and added at least a basic file to moduleroot (a LICENSE file is often a safe place to start), you can run modulesync to see what will be changed. In this case I’m going to be working with the gds-operations/puppet_modulesync_config repo.

bundle install
# run the module sync against a single module and show potential changes
bundle exec msync update -f puppet-rbenv --noop

This command will filter the managed modules (using the -f flag to select them), clone the remote git repo(s), placing them under modules/, change the branch to either master or the one specified in modulesync.yml and then present a diff of changes from the expanded templates contained in moduleroot against the cloned remote repo. None of the changes are actually made thanks to the --noop flag. If you’re happy with the diff you can add a commit message (with -m message), remove --noop and then run the command again to push the amended branch.

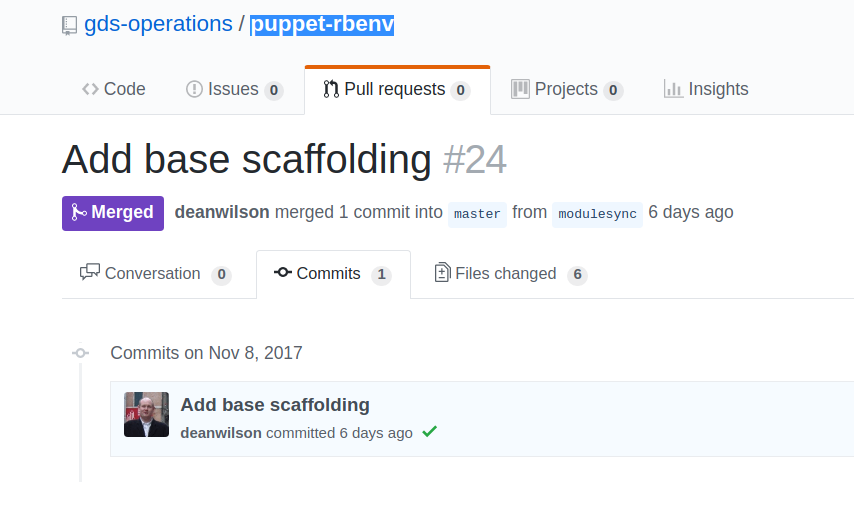

bundle exec msync update -m "Add LICENSE file" -f puppet-rbenv

Once the branch is pushed you can review and create a pull request as usual.

We’re at a very early stage of adoption, so there is a large swathe of functionality we’re not using yet and that I’ve not mentioned. If you’re using the moduleroot templates as actual templates you can have a local override, in each remote module/GitHub repo, that localises the configuration and is merged with the main configuration. This allows you to push settings out to where they’re needed while still keeping most modules baselined. You can also customise the syncing workflow to bump the minor version, update the CHANGELOG and use a number of other helpful shortcuts provided by modulesync.
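The merging of a local override with the main configuration can be pictured with a short Ruby sketch. This is only an approximation under my own assumptions: the merge_configs helper, the Gemfile and LICENSE keys, and the values are all hypothetical, and modulesync's real merge rules may differ in the details. As far as I know the per-module override conventionally lives in a .sync.yml file in the module's repo.

```ruby
require 'yaml'

# Hypothetical shared defaults, keyed by the file they apply to.
defaults = YAML.safe_load(<<~YAML)
  ---
  Gemfile:
    puppet_version: '~> 4.0'
    extra_gems: []
  LICENSE:
    holder: Example Organisation
YAML

# Hypothetical override stored alongside a single module.
overrides = YAML.safe_load(<<~YAML)
  ---
  Gemfile:
    extra_gems:
      - rspec-puppet-facts
YAML

# Hypothetical helper (not modulesync's own code): merge per file, letting
# the module's local values win over the shared defaults.
def merge_configs(defaults, overrides)
  defaults.merge(overrides) do |_file, base, local|
    base.merge(local)
  end
end

merged = merge_configs(defaults, overrides)
puts merged.to_yaml
```

Only the keys a module overrides change. Everything else stays at the shared baseline, which is what keeps most modules identical.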

Once you get above half-a-dozen modules it’s a good time to take a step back and think about how you’re going to manage dependencies, versions, spec_helpers and such in an ongoing, iterative way. modulesync presents one very helpful possible solution.